One of the MySQL features I like to exploit most is the covering index. Simply put, if all the fields a query needs are contained in an index, there is no need to fetch the table row from somewhere else. In MyISAM that means not going to the MYD file. In InnoDB, data is clustered together with the PK (another lovely feature) and secondary indexes contain PK values, so it is one less B-tree to traverse, too.

I’ll take a real-life example where it really mattered. In Wikipedia, for the English language alone, we have a ‘revision’ table with 100m records. The PK is (page_id, revision_id), so any per-page operation (say, viewing the complete history of changes) reads data clustered together in a single block. Another frequent operation is checking user contributions, which of course has an index on (user_id, timestamp) and uses it to traverse all revisions made by a particular user.

In such a case, if the user's edits are not concentrated on a single page (and of course there are many such users), following the index entries into the primary index has to read, in the worst case, a 16k page from disk (and maybe a bigger stripe from RAID) for every 150-byte row it needs. To read information about 10000 revisions one would end up reading 200MB of data (and doing 10000 seeks). Simply adding a covering index reduces that to about 100 seeks (and just 2MB of data read).
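The arithmetic behind those figures can be sketched in a few lines. This is only a back-of-the-envelope estimate: it assumes every row lands on a different page in the scattered case and that index pages are packed completely full in the covering case, both of which are simplifications. The bare page math comes out somewhat below the 200MB and 100 seeks quoted above, which presumably also allow for larger RAID stripe reads and partially filled pages.

```python
import math

PAGE_BYTES = 16 * 1024   # page size quoted in the text (16k)
ROW_BYTES = 150          # approximate row size quoted in the text
ROWS = 10_000            # revisions read for one user

# Worst case without a covering index: every row sits on a different page,
# so each row costs one seek and one full page read.
scattered_pages = ROWS
scattered_mib = scattered_pages * PAGE_BYTES / 2**20

# With a covering index the entries for one user are adjacent in the index.
# Assumption: pages are packed full, ignoring fill factor and page overhead.
rows_per_page = PAGE_BYTES // ROW_BYTES
covering_pages = math.ceil(ROWS / rows_per_page)
covering_mib = covering_pages * PAGE_BYTES / 2**20

print(f"scattered: {scattered_pages} page reads, {scattered_mib:.2f} MiB")
print(f"covering:  {covering_pages} page reads, {covering_mib:.2f} MiB")
```

Either way, the gap is about two orders of magnitude in both seeks and bytes read.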

In our case we simply added an index on (user, timestamp, page, variousmetadata, comments, etc), that is, on all the fields that were in the table. It made a complete copy of the 16GB table, but on the other hand, RAM costs more per gigabyte than disk storage.
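To see the effect of widening an index in a form that is easy to run, here is a minimal sketch using Python's built-in `sqlite3` module rather than MySQL. SQLite's planner and storage differ from InnoDB, so this only illustrates the idea. The table is a cut-down, assumed version of `revision`: only `rev_user_text` and `rev_minor_edit` appear in the queries in this post, the other column names and the index name `user_idx` are made up for the example.

```python
import sqlite3

# Cut-down, assumed schema; not the real Wikipedia table definition.
SCHEMA = """
CREATE TABLE revision (
    rev_page       INTEGER NOT NULL,
    rev_id         INTEGER NOT NULL,
    rev_user_text  TEXT    NOT NULL,
    rev_timestamp  TEXT    NOT NULL,
    rev_minor_edit INTEGER NOT NULL,
    PRIMARY KEY (rev_page, rev_id)
)
"""

QUERY = "SELECT sum(rev_minor_edit) FROM revision WHERE rev_user_text = ?"


def query_plan(index_columns):
    """Build a fresh in-memory table with one index and return SQLite's plan."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.execute(f"CREATE INDEX user_idx ON revision ({index_columns})")
    rows = conn.execute("EXPLAIN QUERY PLAN " + QUERY, ("Test user",)).fetchall()
    conn.close()
    # The human-readable plan text is the fourth column of each row.
    return " ".join(row[3] for row in rows)


# Index that only serves the WHERE clause: rows must still be fetched.
narrow_plan = query_plan("rev_user_text, rev_timestamp")
# Index that also carries the selected column: the table is never touched.
covering_plan = query_plan("rev_user_text, rev_timestamp, rev_minor_edit")

print(narrow_plan)
print(covering_plan)
```

In MySQL the equivalent check is to run `EXPLAIN` on the query and look for `Using index` in the `Extra` column.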

This may not be an issue in environments where the primary key (and table data) is already fully cached in memory. Accessing a record in a page that is already in memory is very cheap compared to reading the page from disk. Of course, this makes a copy of the table data, but it really removes I/O saturation, especially if the data is very cold.

Of course, still being on a tight budget, we saved some disk space here too. Simply putting such a wide index on a single slave and not on the others, then sending all queries that need the index to that slave, solved many resource issues. Maintaining secondary indexes inside InnoDB is not that expensive: writes to non-unique secondary indexes can be delayed via the ‘insert buffer’, and transaction logs contain just information about a single copy of a row.
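The routing idea can be sketched roughly as follows. This is not the actual load balancer code: the host names and the `contributions` group name are hypothetical, and the only point is that queries tagged with a group go to the replica that carries the wide index while everything else uses the general pool.

```python
import random

# Hypothetical host names: only replica-c carries the wide covering index.
GENERAL_POOL = ["replica-a", "replica-b", "replica-c"]

# Hypothetical query groups mapped to the replicas able to serve them cheaply.
GROUP_POOLS = {"contributions": ["replica-c"]}


def pick_replica(query_group=None, rng=random):
    """Return a host for the query; unknown groups fall back to the general pool."""
    pool = GROUP_POOLS.get(query_group, GENERAL_POOL)
    return rng.choice(pool)


print(pick_replica("contributions"))  # always the replica with the wide index
print(pick_replica())                 # any replica from the general pool
```

A real setup would also need weights and a fallback for when the specialised replica is down or lagging.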

Once we go over 50 (or 20 :) DB servers, we will probably want to think about making such index changes and load balancing more automatic, but for now solving edge cases this way seems proper.

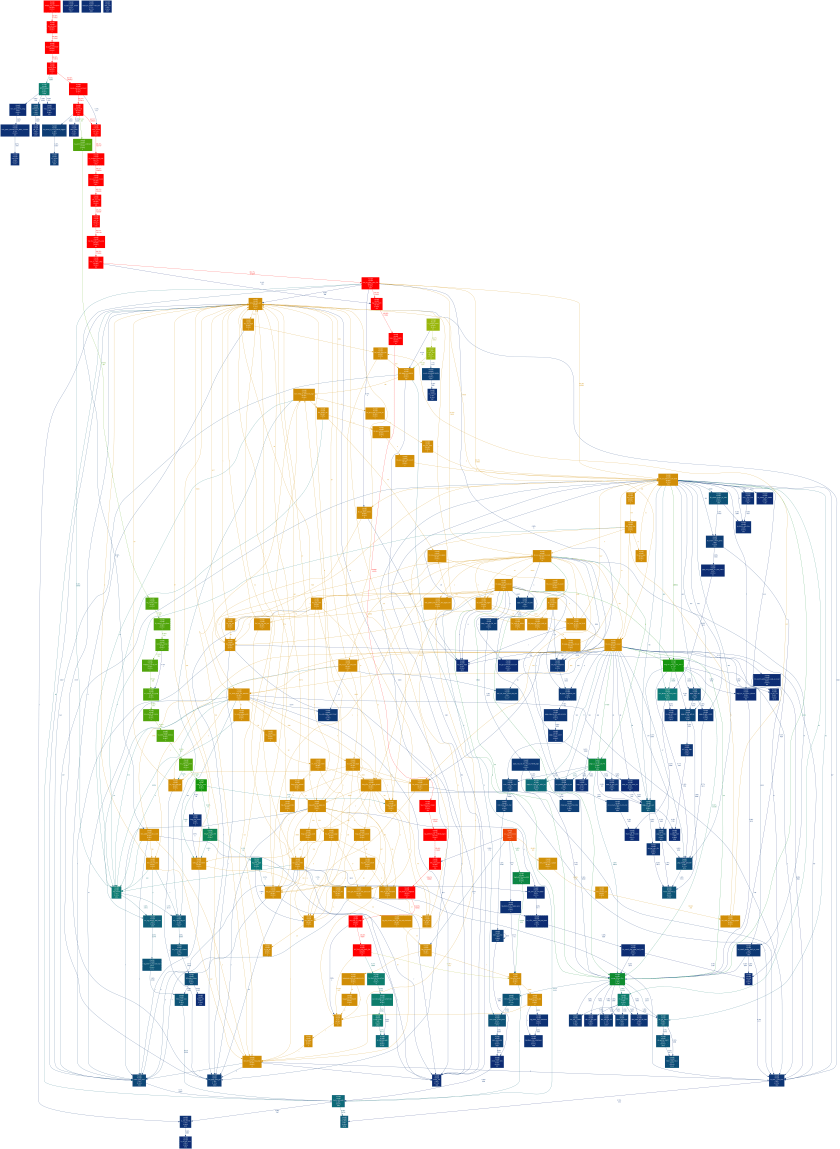

And here is a comparison of two DB servers, one with a covering index and one with a simple index (just for the WHERE conditions):

db4 mysql> select sum(rev_minor_edit) from revision where rev_user_text='Test user';

1 row in set (21.73 sec)

db6 mysql> select sum(rev_minor_edit) from revision where rev_user_text='Test user';

1 row in set (2.30 sec)

Both servers read completely cold data (though they were in a working state with warmed-up buffers), and repeating the queries gave results that were not that different (both servers executed them in under a second). Of course, 200MB of pages in the buffer possibly displaced useful information…

Covering indexes are a really powerful tool in environments where cold, non-buffered data exists, and they prove yet again that the access patterns of applications are very important when designing any schema or indexes. It is really one of the major things I expect from a storage engine (or a DBMS in general) in any bigger data environment.